Update: Since first writing this post, I’ve actually updated the web app in question to look a little nicer and even work on smaller screens, so the video demo in this post won’t match what you’ll see if you follow the links to try it yourself. The basic code running everything remains the same though.

So a while ago, I stumbled onto this post on the TensorFlow blog, talking about the release of a JavaScript version of the PoseNet machine learning model for real time human pose estimation that can run in a browser using a webcam. I noted it at the time as we were learning about motion capture in Biomechanics during my undergrad Sports Therapy course, and I was curious if this could be used as a form of markerless motion tracking, so I stored it away in the back of my mind.

Somewhat later, in third year, we had to take a Business Studies module. The one and only assessment for this module involved creating a business pitch for a new business of our choosing, but ideally related to our field of study. We were encouraged by our lecturer to add some ‘wow factor’ to the pitch by including something like a video or, even better, a live demonstration of our product.

The idea struck me to go back to PoseNet and create a business around delivering in-browser biomechanical analysis of movements that sports therapists could use to assess patients remotely. All the patient would need to do is point their webcam at themselves while moving and the therapist could get a report on joint or segment angles to assess ranges of motion! I knew if I could get a demo of this working the way I wanted, it would definitely provide that ‘wow’ my lecturer was after.

One small problem - I’d never really done anything in JavaScript, never mind with machine learning. Hell, at that point I was still rusty with my HTML/CSS alone, having not done anything web related for half my life.

Fortunately for me, I’d also recently stumbled onto the Coding Train YouTube channel (seriously, the amount of stumbling onto exactly the thing I need on the web is kind of bizarre). Coding Train had recently uploaded some videos on ‘friendly’ machine learning models using something called ml5, including this one on PoseNet that gave me exactly the base I needed to start. In fact, the example code for PoseNet with a webcam using ml5.js and p5.js is almost entirely intact in my final code - I just built a couple of things on top of it. I can heartily recommend both Coding Train and ml5 for anyone looking to start playing with some really interesting machine learning models!

I didn’t have time to build much, so I focused on one movement - the squat - and built a simple single page app that takes the pose info from PoseNet and adds a couple of extra bits of maths to present some common biomechanical markers, before logging them to a little dashboard. Here’s an example video:

Please excuse my generally shabby state in the video - I was in full Covid-19 lockdown mode when this was recorded.

The PoseNet and skeleton-drawing (the white lines and dots overlaying the video) code was already there from ml5, so now I had to figure out how to get the joint and segment angles. I had the x and y coordinates of each white dot representing a joint, so I figured there must be a way to translate this into angles.

I’m no great mathematician, so a little google-fu found me this Stack Overflow answer that gave me what I needed. I’d previously used atan2 in R for calculating resultant forces, so I was familiar with how it worked, and a little extra calculation translates the points so that the ‘joint’ in our case becomes the origin (0, 0) of the coordinate system. Here’s an example of this applied to knee flexion, where poses[0]... is the data coming from PoseNet:

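```javascript
// Left-leg keypoints from the first detected pose (x and y in canvas pixels).
const knee = poses[0].pose.leftKnee;
const hip = poses[0].pose.leftHip;
const ankle = poses[0].pose.leftAnkle;

// Treat the knee as the origin: take the direction of the knee->ankle and
// knee->hip vectors with atan2, subtract them, and convert radians to degrees.
// A straight leg gives 180; whether flexion pushes the value below or above
// 180 depends on which way the subject faces the camera.
const kneeFlexion = (
  Math.atan2(ankle.y - knee.y, ankle.x - knee.x) -
  Math.atan2(hip.y - knee.y, hip.x - knee.x)
) * (180 / Math.PI);
```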

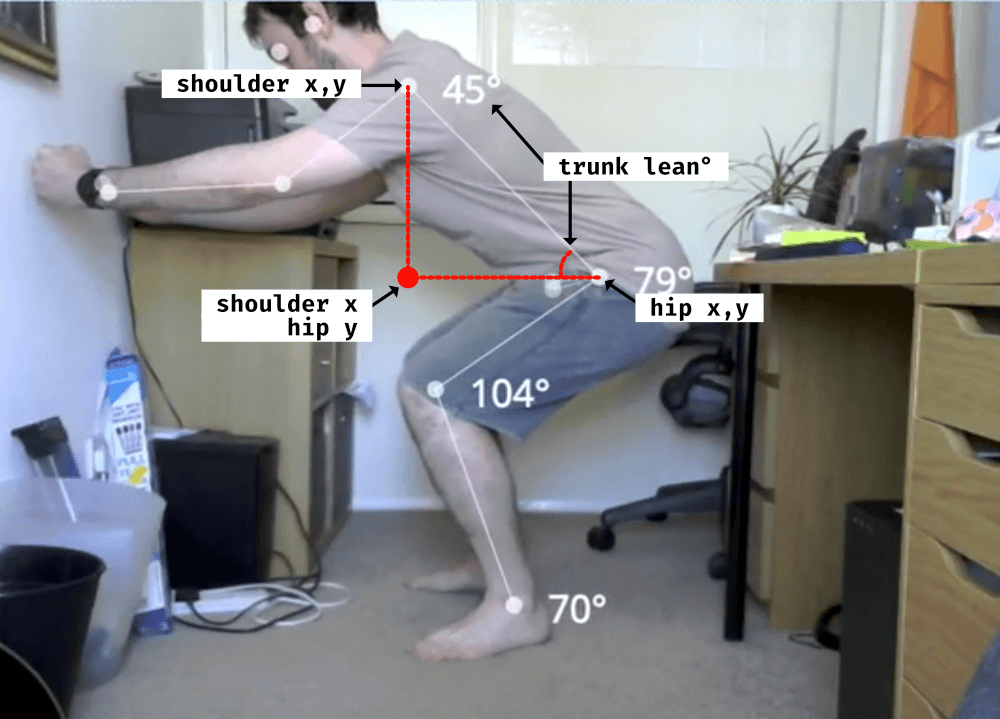
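The knee calculation above generalises to any three keypoints, with the middle one acting as the joint. Below is a minimal sketch, assuming keypoints are plain `{x, y}` objects in canvas pixels (y increasing downwards) like the ones PoseNet returns; the helper name `jointAngle`, the wrap into the 0 to 360 degree range, and the sample coordinates are my own illustrative additions rather than part of the original app.

```javascript
// Hypothetical helper: angle at `vertex` from the vertex->b ray to the
// vertex->a ray, in degrees, wrapped into [0, 360).
// Points are {x, y} objects in canvas coordinates (y grows downwards).
function jointAngle(a, vertex, b) {
  // Translate both outer points so the joint sits at (0, 0), then take the
  // direction of each ray with Math.atan2(dy, dx).
  const angleA = Math.atan2(a.y - vertex.y, a.x - vertex.x);
  const angleB = Math.atan2(b.y - vertex.y, b.x - vertex.x);
  const degrees = (angleA - angleB) * (180 / Math.PI);
  // A difference of two atan2 results lies in (-360, 360); wrap it into [0, 360).
  return ((degrees % 360) + 360) % 360;
}

// Made-up keypoints for a straight leg: hip directly above knee, ankle directly below.
const hip = { x: 0, y: -100 };
const knee = { x: 0, y: 0 };
const ankle = { x: 0, y: 100 };

console.log(Math.round(jointAngle(ankle, knee, hip))); // 180
```

Calling it as `jointAngle(ankle, knee, hip)` reproduces the knee flexion formula above, apart from the wrapping, which keeps mirrored poses from producing negative values.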

One interesting conundrum was calculating shin and trunk angles, given that these needed to be measured relative to a fixed external reference (an absolute angle in biomechanics terms) - ideally the floor or a wall. I also still needed three points to work with atan2, as in the example above. I ended up creating an imaginary third point, combining the x coordinate of the superior joint (shoulder for trunk, knee for shin) with the y coordinate of the inferior joint (hip for trunk, ankle for shin). This projects a third point out into space that moves along with these joints and forms a nice right-angled triangle, so I could essentially use the sides of this triangle as the external reference for these angles.

Here’s an example illustration for trunk lean:

And the code:

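```javascript
const hip = poses[0].pose.leftHip;
const shoulder = poses[0].pose.leftShoulder;

// Imaginary third point: the shoulder's x with the hip's y, i.e. level with
// the hip and directly below the shoulder, forming a right-angled triangle.
const imaginaryPoint = { x: shoulder.x, y: hip.y };

// Angle at the hip between the horizontal side (hip->imaginaryPoint) and the
// trunk (hip->shoulder), converted to degrees and subtracted from 360.
const trunkLean = 360 - (
  Math.atan2(imaginaryPoint.y - hip.y, imaginaryPoint.x - hip.x) -
  Math.atan2(shoulder.y - hip.y, shoulder.x - hip.x)
) * (180 / Math.PI);
```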

It’s not a perfect system, but it’s good enough!
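The shin angle mentioned above follows the same pattern as the trunk, with the knee standing in for the shoulder and the ankle for the hip. Here is a minimal sketch that wraps the trunk-lean formula in one reusable function and applies it to both segments; `absoluteAngle` is a hypothetical name, and the keypoint coordinates are made up for illustration.

```javascript
// Hypothetical helper generalising the trunk-lean calculation:
// `superior` is the upper joint (shoulder for trunk, knee for shin) and
// `inferior` the lower joint (hip for trunk, ankle for shin).
function absoluteAngle(superior, inferior) {
  // Imaginary third point: superior joint's x, inferior joint's y. Its side
  // from the inferior joint is horizontal and acts as the external reference.
  const imaginaryPoint = { x: superior.x, y: inferior.y };
  return 360 - (
    Math.atan2(imaginaryPoint.y - inferior.y, imaginaryPoint.x - inferior.x) -
    Math.atan2(superior.y - inferior.y, superior.x - inferior.x)
  ) * (180 / Math.PI);
}

// Made-up canvas coordinates for someone facing left (towards smaller x).
const shoulder = { x: 190, y: 200 };
const hip = { x: 200, y: 300 };
const knee = { x: 170, y: 400 };
const ankle = { x: 200, y: 500 };

console.log(absoluteAngle(shoulder, hip).toFixed(1)); // "84.3" (trunk)
console.log(absoluteAngle(knee, ankle).toFixed(1)); // "73.3" (shin)
```

As with the knee, direction matters: mirroring the same lean so the person faces towards larger x returns 360 minus the value, so a lean that reads about 84 degrees facing left reads about 276 degrees facing right.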

From there, it was just a case of storing each measurement in a variable and outputting it to the HTML, updating it only when a new reading exceeds the stored value, so the dashboard logs, for example, the maximum knee flexion achieved. Just to be fancy, I threw in a button to switch sides (which just means changing poses[0].pose.leftKnee to poses[0].pose.rightKnee) and a button to snapshot the current video output and download it (using p5's saveCanvas).
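The "keep it only if it's bigger" logic and the side switch described above are simple enough to sketch in plain JavaScript. This assumes a `pose` object shaped like ml5's `poses[0].pose` (keypoints named `leftKnee`, `rightKnee` and so on); `side`, `maxima`, `keypointFor`, `toggleSide` and `recordIfHigher` are hypothetical names, keeping separate maxima per side is my own design choice, and the DOM/p5 wiring (writing to the HTML, the buttons, `saveCanvas`) is left out so the sketch runs on its own.

```javascript
// Hypothetical dashboard state (names are illustrative, not from the app):
// the side currently analysed and the best reading seen so far, per side.
let side = "left";
const maxima = { left: {}, right: {} };

// Look up a keypoint for the current side, e.g. "left" + "Knee" -> pose.leftKnee.
function keypointFor(pose, joint) {
  return pose[side + joint];
}

// What a "switch sides" button handler would call.
function toggleSide() {
  side = side === "left" ? "right" : "left";
}

// Store a reading only when it beats the stored maximum for the current side.
// Returns true when the stored value changed (i.e. the HTML needs updating).
function recordIfHigher(name, value) {
  const best = maxima[side][name];
  if (best === undefined || value > best) {
    maxima[side][name] = value;
    return true;
  }
  return false;
}

// Usage with a made-up pose and made-up readings from three frames.
const pose = { leftKnee: { x: 120, y: 340 }, rightKnee: { x: 160, y: 338 } };
console.log(keypointFor(pose, "Knee").x); // 120
for (const reading of [95, 110, 104]) recordIfHigher("kneeFlexion", reading);
console.log(maxima.left.kneeFlexion); // 110
toggleSide();
console.log(keypointFor(pose, "Knee").x); // 160
```

One thing worth checking before reusing this: with the knee formula above, a straight leg reads 180 degrees and flexion moves the value away from 180 in a direction that depends on which way the subject faces, so the comparison in `recordIfHigher` may need flipping to track the deepest squat.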

For a first-time adventure in JavaScript, this was probably jumping in at the deep end, but I’m pleased with the outcome (and it must have achieved that wow factor I was after, as I got a pretty great mark on the assessment). You can view the (probably quite ugly) code on GitHub and even try it for yourself here.

Once again, check out ml5 and Coding Train for more machine learning model goodness!